Stop Risky AI Decisions at Runtime

Enforce policy in-line so violations are contained before they spread.

Runtime AI Risk Is Hard to Contain

Most AI failures look like valid requests until intent, context, or tool access turns a normal workflow into a policy violation.

Injection Breaks Guardrails

Prompts can override intended instructions, trigger disallowed content, or steer workflows into unsafe actions.

Controls Are Scattered

Guardrails live in prompts, app code, and model vendor settings. Security and IT can’t centrally manage enforcement.

Risk Is Contextual

The same workflow can be low risk in one context and high risk in another due to destination, model, or tool parameters.

Agent Automation Expands Blast Radius

When AI touches tools and data, one bad interaction can cascade across systems before anyone notices.

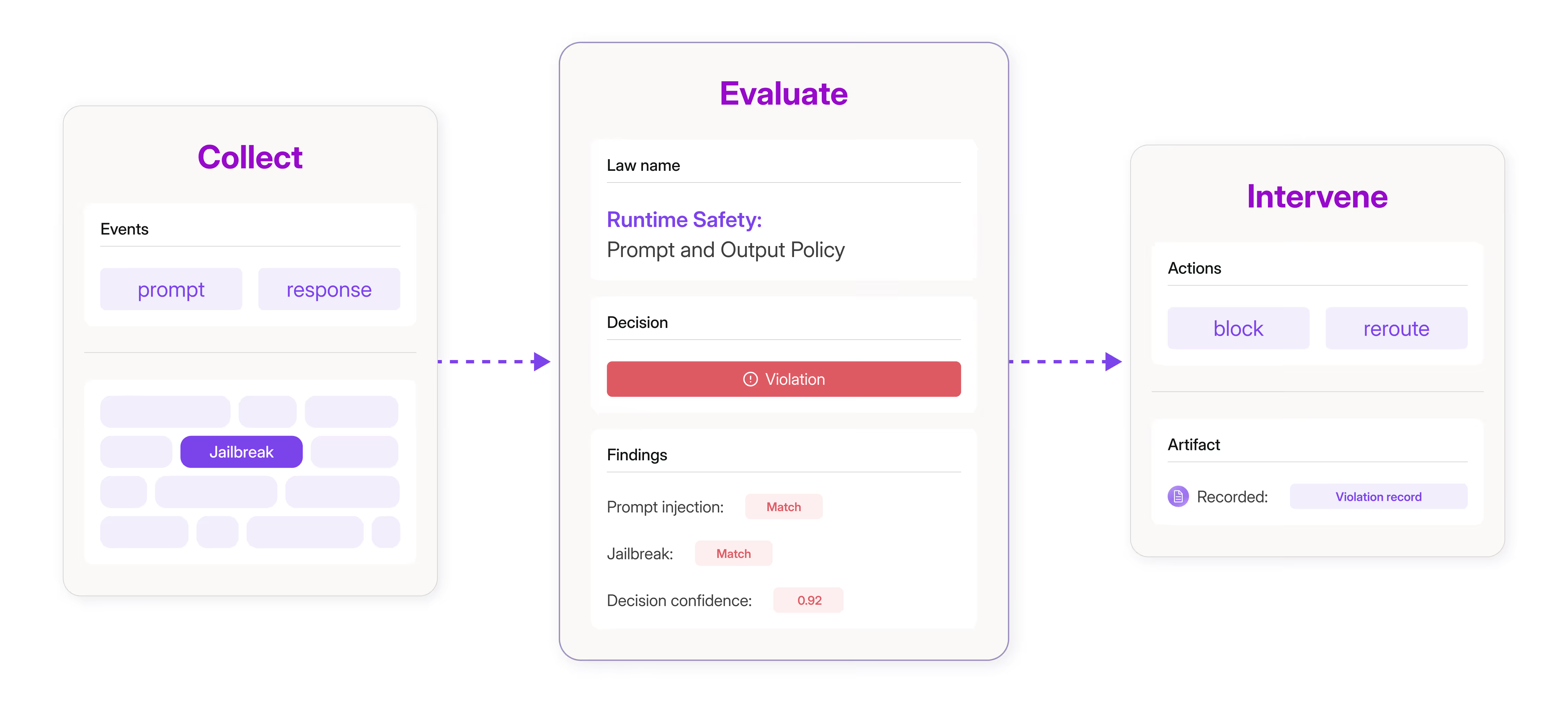

Apply Runtime Safety to Prompts and Outputs

Prompt and response signals are evaluated in-line, and any violation is captured in a violation record.

Enforce Policy at the Point of Decision

Make each AI decision policy-aware, with outcomes based on scope and severity.

Inline Policy Evaluation

Evaluate prompts, outputs, and actions against scoped Laws, from fast patterns to model-based checks.
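
To illustrate the "fast patterns to model-based checks" idea, here is a minimal Python sketch of a tiered evaluation: a cheap regex runs first, and a slower model-based check is called only if the pattern does not match. The names `Verdict`, `evaluate`, `stub_model_check`, and the law name `no-prompt-injection` are hypothetical and are not part of any product API.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Verdict:
    law: str       # hypothetical law name
    check: str     # which tier matched: "pattern" or "model"
    severity: str


# Hypothetical fast tier: a regex for one common injection phrasing.
INJECTION_PATTERN = re.compile(
    r"ignore (all )?(previous|prior) instructions", re.IGNORECASE
)


def evaluate(text: str, model_check: Callable[[str], bool]) -> Optional[Verdict]:
    """Run the cheap pattern first; fall through to the slower check only if needed."""
    if INJECTION_PATTERN.search(text):
        return Verdict(law="no-prompt-injection", check="pattern", severity="high")
    if model_check(text):
        return Verdict(law="no-prompt-injection", check="model", severity="medium")
    return None


def stub_model_check(text: str) -> bool:
    """Stand-in for a classifier or LLM judge (assumption: a real system calls a model here)."""
    return "system prompt" in text.lower()
```

Ordering the tiers from cheapest to most expensive keeps latency low for the common case, since the model-based check runs only on text the pattern tier did not already flag.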

Context-Aware Runtime Decisions

Use the full interaction context, including retrieval snippets and tool parameters, to reduce false positives and catch higher-risk behavior.

Private Deployment Options

Deploy in your VPC or privately hosted environment to meet enterprise data and residency requirements.

Route to SecOps Workflows

Send violations with supporting context into SIEM, SOAR, or ITSM workflows for triage, escalation, and response.

Put Policy in the Path

Enforce Laws across AI applications and agents, scoped by app, route, role, model, and tool.
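
One way to picture scoping is as a filter over request attributes. The Python sketch below assumes a Law carries an optional scope over app, route, role, model, and tool, and that an omitted key matches anything. The `Law` class, the sample laws, and `laws_in_scope` are illustrative assumptions, not the product's schema.

```python
from dataclasses import dataclass, field


@dataclass
class Law:
    name: str
    # Hypothetical scope: any of app, route, role, model, tool. Omitted keys match anything.
    scope: dict = field(default_factory=dict)

    def applies_to(self, request: dict) -> bool:
        """A law applies when every key in its scope equals the request's value."""
        return all(request.get(key) == value for key, value in self.scope.items())


# Illustrative laws only.
laws = [
    Law("no-pii-export", {"app": "support-bot", "tool": "email.send"}),
    Law("no-prompt-injection"),  # empty scope: applies everywhere
]


def laws_in_scope(request: dict) -> list:
    """Return the names of all laws whose scope matches this request."""
    return [law.name for law in laws if law.applies_to(request)]
```

With these sample laws, a request from `support-bot` using the `email.send` tool is subject to both laws, while the same tool call from another app is subject only to the unscoped one.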

Prompt Injection Defense

Detect and block prompt injection, jailbreaks, and instruction hijacking in production.

Unsafe Output Guardrails

Prevent prohibited or out-of-scope responses before they reach users.

Acceptable Use Boundaries

Enforce behavior limits by role, app, and model so the same assistant is permitted in one context and restricted in another.

Runaway Agents

Detect looping behavior and abnormal patterns, then throttle or escalate based on policy scope.
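
As a rough illustration of loop detection, the Python sketch below counts identical tool calls inside a sliding window and returns `throttle` or `escalate` once a threshold is crossed. The `LoopDetector` class, the window size, and both thresholds are assumptions chosen for the example. In practice they would come from the policy scope.

```python
from collections import Counter, deque


class LoopDetector:
    """Flags an agent that repeats the same tool call within a recent window."""

    def __init__(self, window: int = 10, throttle_at: int = 3, escalate_at: int = 5):
        self.recent = deque(maxlen=window)  # the oldest calls fall out automatically
        self.throttle_at = throttle_at
        self.escalate_at = escalate_at

    def observe(self, tool: str, args: str) -> str:
        """Record one tool call and return "allow", "throttle", or "escalate"."""
        call = (tool, args)
        self.recent.append(call)
        repeats = Counter(self.recent)[call]
        if repeats >= self.escalate_at:
            return "escalate"
        if repeats >= self.throttle_at:
            return "throttle"
        return "allow"
```

Exact-match counting is the simplest possible signal. Detecting near-duplicate calls or abnormal call rates would need a similarity or rate-based check instead.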

Control, Not Just Monitoring

Runtime control that’s enforceable by policy, not hardcoded in prompts.

Intent-Aware Decisions

Evaluate semantic intent and tool-use decisions in-line, not just known patterns or endpoints.

Latency and Precision Dials

Choose patterns, similarity, classifiers, or model-based checks to balance precision, cost, and speed.

Decision Trail Included

Keep a record of what was evaluated, what matched, and what happened next, with the context your existing workflows need.
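
A decision trail entry might look something like the Python sketch below, which serializes what was evaluated, what matched, and what happened next as JSON for a downstream SIEM, SOAR, or ITSM tool. The `DecisionRecord` class and its field names are hypothetical and are not a documented schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    evaluated: str     # what was evaluated: prompt, output, or tool call
    matched_law: str   # what matched (hypothetical law name)
    action: str        # what happened next, e.g. "allow", "block", "escalate"
    context: dict = field(default_factory=dict)  # supporting context for triage
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize with sorted keys so downstream parsers see a stable layout."""
        return json.dumps(asdict(self), sort_keys=True)


record = DecisionRecord(
    evaluated="tool_call",
    matched_law="no-pii-export",
    action="block",
    context={"app": "support-bot", "tool": "email.send"},
)
print(record.to_json())
```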